Amazon has its place as a supplier, but for many things I’d like to avoid their marketplace and buy directly. So I’m making a public list for myself, so I know where else to look for suitable alternatives.

Please comment if you know good sources for other products; it will help me and may help others too. Thanks!

| Name | URL | Products |

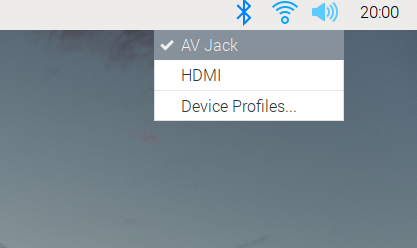

| Kenable | https://www.kenable.co.uk/en/ | Cables and IT related (computer, audio, network) and accessories. Example, HDMI 2.0 cable, £3 each inc VAT & post £1.07 |

Reasons I am trying to avoid Amazon:

Their product listings and search are awful

In my experience, product search results are often for different items than the search term implies. Sponsored listings appear to be prioritised over more relevant results.

I don’t believe they pay their morally fair share of tax

Tax is complicated, and I have no idea how to solve that. Multinationals will always move money to the lowest-tax environment they can before paying dividends to shareholders. That is the nature of our imperfect global tax system, not an Amazon issue alone. Even so, I feel that spending a similar amount with smaller UK-based businesses or wholesalers is likely better for getting funds to our government, where they can then be spent within our society. Example: https://www.taxwatchuk.org/amazon_tax_cut/ gives “Amazon UK Services Ltd 2019” a profit of £101m and tax of £6.3m. An effective rate of about 6% doesn’t feel fair to me.

They have issues with counterfeit products

They have a system of co-locating stock from different sellers in their warehouse if the item is the same. If one seller sends in 100 fake memory cards, that fake stock may be sold in place of stock from a seller who sent in 100 genuine memory cards. Even without co-location, Amazon has a poor reputation for ensuring that the products offered on their marketplace by third-party sellers are safe or legal to sell.